by Anthony Wilkins Issue 107 - November 2015

The world of broadcast audio is on the verge of a major revolution. Numerous 3D immersive formats are under development and will find their way into mainstream broadcast production and distribution in the near future. Unlike the relatively constrained channel-based coding we are accustomed to (most commonly Left / Right for stereo, and Left / Centre / Right / Surround Left / Surround Right plus LFE, or Low Frequency Effects, for surround), these new codecs will support more channels and/or object-based audio coding. For the end consumer, this new approach brings two major benefits: a greater sense of involvement, or immersion, and a degree of personalisation.

Greater involvement will come from an increased channel count, typically through the addition of overhead height channels. Several proponents, including the MPEG-H Alliance (Fraunhofer IIS, Technicolor and Qualcomm), Dolby and DTS, have successfully demonstrated broadcast-compatible systems based on 5.1.4 or 7.1.4 layouts, in which four overhead speakers augment the traditional six or eight floor-level speakers (the third figure in the layout label gives the number of height speakers). This creates a 3D sound image in which the viewer or listener is immersed in audio in all dimensions.
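The layout labels above follow a common X.Y.Z convention: full-range floor speakers, then LFE channels, then overhead speakers. The short Python sketch below assumes that convention (the function name `channel_count` is hypothetical) and simply makes the speaker arithmetic explicit.

```python
def channel_count(layout: str) -> dict:
    """Split an 'X.Y.Z' layout label into floor, LFE and height counts.

    Assumes the common convention: X = full-range floor speakers,
    Y = LFE channels, Z = overhead (height) speakers. A two-part label
    such as '5.1' is treated as having no height layer.
    """
    parts = [int(p) for p in layout.split(".")]
    if len(parts) == 2:
        parts.append(0)  # no height layer given
    if len(parts) != 3:
        raise ValueError(f"unexpected layout label: {layout!r}")
    floor, lfe, height = parts
    return {
        "floor": floor,
        "lfe": lfe,
        "height": height,
        "floor_total": floor + lfe,  # floor-level speakers, counting the LFE
        "total": floor + lfe + height,
    }


for label in ("5.1", "5.1.4", "7.1.4"):
    print(label, channel_count(label))
```

For 7.1.4 this gives eight floor-level channels (counting the LFE) plus four height channels, twelve in total; 5.1.4 gives ten.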

Object-based audio will give end users the option to personalise their experience by selecting from a number of audio sources and controlling the level, and perhaps even the position, of each in the mix. In object-based audio, an object is essentially an audio stream with accompanying descriptive metadata. The metadata records where the engineer placed the object in the mix and at what level, and is used to re-create, or render, the audio experience for the end user. Objects may be static, placed at a fixed point in the mix (for example, dialogue), or dynamic, panned across the three-dimensional soundscape (for example, a plane flying overhead). Within the restrictions and limits decided at the point of content creation (and signalled in the descriptive metadata), end users could select which audio source(s) they want to hear from an on-screen list and perhaps vary the level at which each is reproduced. Think of a sporting event where the options could include home or away commentary, crowd sound, the voices of the referee or match officials, or pitch-side microphones at team ball games. At motor racing events, the options could include commentary, drivers' voices or pit crew communications.
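To make the object idea concrete, here is a minimal Python sketch of the descriptive metadata attached to each object, plus a personalisation step driven by the viewer's choices. Everything in it is hypothetical: the field names (`selectable`, `min_offset_db`, `max_offset_db` and so on) and the `personalise` function are illustrative only, and real formats such as MPEG-H define their own metadata syntax. The sketch assumes that objects the content creator marks as non-selectable are always heard, and that viewer level changes are clamped to the range the creator allowed.

```python
from dataclasses import dataclass


@dataclass
class AudioObject:
    # Hypothetical metadata fields for illustration; real codecs define their own.
    name: str
    azimuth_deg: float          # horizontal position set by the mix engineer
    elevation_deg: float        # 0 = floor level, positive = overhead
    gain_db: float              # level set by the mix engineer
    selectable: bool = True     # may the viewer switch this object on or off?
    min_offset_db: float = 0.0  # lowest level change the viewer may apply
    max_offset_db: float = 0.0  # highest level change the viewer may apply


def personalise(objects, enabled, offsets_db):
    """Return (name, final_gain_db) for each object the viewer will hear.

    Non-selectable objects are always kept; requested level offsets are
    clamped to the limits carried in each object's metadata.
    """
    result = []
    for obj in objects:
        if obj.selectable and obj.name not in enabled:
            continue  # viewer has switched this object off
        requested = offsets_db.get(obj.name, 0.0)
        allowed = min(max(requested, obj.min_offset_db), obj.max_offset_db)
        result.append((obj.name, obj.gain_db + allowed))
    return result


objects = [
    AudioObject("crowd", 0.0, 30.0, -6.0, selectable=False),
    AudioObject("home_commentary", 0.0, 0.0, -3.0, min_offset_db=-6.0, max_offset_db=6.0),
    AudioObject("away_commentary", 0.0, 0.0, -3.0, min_offset_db=-6.0, max_offset_db=6.0),
    AudioObject("referee", 0.0, 0.0, -10.0, min_offset_db=-3.0, max_offset_db=3.0),
]

# Viewer picks home commentary and the referee, and asks for +10 dB on the
# commentary, which the metadata limits to +6 dB.
mix = personalise(
    objects,
    enabled={"home_commentary", "referee"},
    offsets_db={"home_commentary": 10.0, "referee": -1.0},
)
print(mix)  # [('crowd', -6.0), ('home_commentary', 3.0), ('referee', -11.0)]
```

Keeping the limits in the metadata, rather than in the receiver, is what lets the content creator decide how far personalisation may go.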

Does this mean multiple speakers need to be installed to benefit from these advances? Not necessarily. The best experience will, of course, come from a full installation of discrete speakers at both floor level and overhead. Where this is not practical (most likely the majority of domestic situations), the final renderer will re-create the mix as accurately as possible for the actual number and location of speakers the end user has. A growing number of soundbars, designed to sit beneath a display, are already available to create a surround experience without consuming valuable space. Better still, a wall-mounted Soundframe completely surrounds the display and can give an incredibly realistic reproduction of the height channels.
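The simplest case of fitting a mix to fewer speakers is a fixed channel downmix. The Python sketch below folds one frame of 5.1 samples into stereo using the -3 dB centre and surround coefficients of ITU-R BS.775, dropping the LFE as is common for stereo downmixes. This is a swapped-in, deliberately simple technique: an object renderer of the kind described above adapts to arbitrary speaker positions, which a fixed matrix cannot. The function name is hypothetical, and a real downmixer would also guard against overload.

```python
import math

K = 1 / math.sqrt(2)  # -3 dB, about 0.7071


def downmix_51_to_stereo(L, R, C, LFE, Ls, Rs):
    """Fold one frame of 5.1 samples into stereo (Lo, Ro).

    Lo = L + K*C + K*Ls and Ro = R + K*C + K*Rs, with K = 1/sqrt(2).
    The LFE sample is accepted but not used.
    """
    lo = L + K * C + K * Ls
    ro = R + K * C + K * Rs
    return lo, ro


# A signal present only in the centre channel appears at -3 dB in both outputs.
print(downmix_51_to_stereo(0.0, 0.0, 1.0, 0.0, 0.0, 0.0))
```

Each downmix an engineer might need to audition, such as stereo from 5.1 or 5.1 from 5.1.4, is a different coefficient set of this kind.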

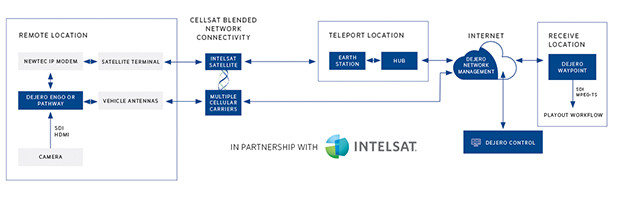

What does this all mean for the broadcaster? A complete rebuild of existing facilities, and a total rethink of the audio processing equipment required for outside broadcast vehicles? Well, if we get it right, it should entail relatively minimal changes to overall workflow and hardware, although some additions to production and distribution equipment are inevitable. In the case of object-based audio, the metadata appropriate for the final codec needs to be created and added to the audio for insertion into a conventional SDI stream, and the engineer needs to be able to monitor this complex mix (and all the potential downmix variations) to ensure that the distribution chain is correctly fed with all available objects. Loudness levels should also be considered with respect to existing standards, and there should be provision for local insertion of content at any point in the chain.

Although this all sounds somewhat futuristic, the broadcast industry is already seriously considering the adoption of these concepts. At Jünger Audio we have already worked closely with Fraunhofer IIS to design a prototype Multichannel Monitoring and Authoring unit (MMA) that can interface directly with existing mixing consoles via AES, SDI, MADI, Dante or even analogue audio. At NAB 2015 there was a comprehensive demonstration of a complete object-based end-to-end broadcast chain, covering the original outside broadcast capture, distribution via the network operations centre, insertion of local content from an affiliate station and, finally, reception in the end user's home with full control of object selection and audio reproduction via Soundframe. At every stage of the distribution chain, the audio could be monitored to verify metadata parameters, downmixes could be auditioned, and loudness levels could be checked and corrected if required.
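Correcting loudness ultimately comes down to a gain change relative to a target. The Python sketch below assumes the integrated loudness has already been measured (for example per ITU-R BS.1770) and computes the correction for two common targets: -23 LUFS under EBU R 128 and -24 LKFS under ATSC A/85 (LUFS and LKFS are the same unit). The function names and the 1 dB default tolerance are assumptions for illustration, not values taken from any particular product.

```python
TARGETS_LUFS = {
    "EBU R 128": -23.0,
    "ATSC A/85": -24.0,  # LKFS, numerically equivalent to LUFS
}


def loudness_correction_db(measured_lufs, standard="EBU R 128", tolerance_db=1.0):
    """Return the gain (dB) needed to bring a programme to the target loudness.

    Returns 0.0 if the measurement is already within the (assumed) tolerance.
    """
    target = TARGETS_LUFS[standard]
    if abs(measured_lufs - target) <= tolerance_db:
        return 0.0
    return target - measured_lufs


def apply_gain(samples, gain_db):
    """Scale samples by a gain given in dB (amplitude ratio 10**(dB/20))."""
    factor = 10 ** (gain_db / 20)
    return [s * factor for s in samples]


# A programme measured at -19.5 LUFS needs 3.5 dB of attenuation for EBU R 128.
print(loudness_correction_db(-19.5))  # -3.5
```

In a real chain the same check would be repeated after each insertion of local content, since each insertion can change the programme loudness.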

Although that particular demonstration used the MPEG-H codec, it is important to understand that the monitoring and authoring unit is agnostic to, or independent of, the final codec used for transmission. Such a device could easily be adapted to create and monitor metadata for any existing or future 3D immersive format, irrespective of vendor or developer.

www.jungeraudio.com